Just a few years ago, a Tesla on Autopilot was involved in a fatal crash after it mistook a truck for empty, clear sky. To us, this is a mistake even a toddler would not make. It certainly shocked the public to learn that cutting-edge technology could make such an error. So how did it happen?

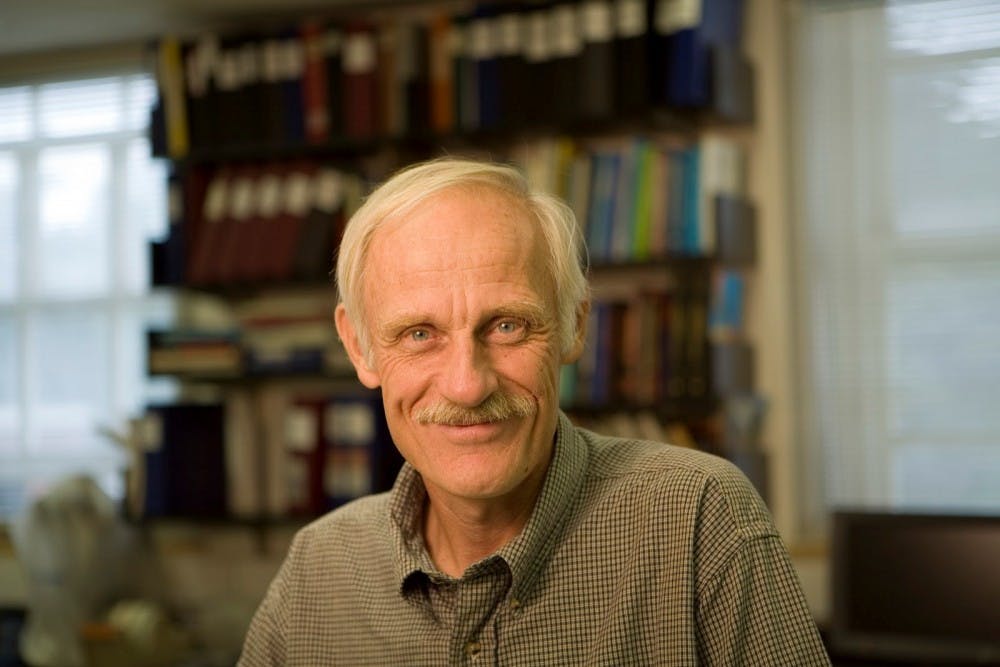

The research of Rudiger von der Heydt, a professor in the Neuroscience department who spent over 20 years at Hopkins studying the visual system, may provide a clue.

“We think that vision is so easy. When we open our eyes, we see a world of objects. But in fact, it is not easy,” he said in an interview with The News-Letter.

Seeing is effortless for humans. The world is full of stimuli, and we have evolved to become extremely good at receiving and processing everything we see. Vision, however, has been a major source of frustration in the computer interface industry, von der Heydt explained.

The main difficulty in understanding vision lies in how the brain processes all of this information.

Von der Heydt stressed that our current understanding of vision is still insufficient for advanced visual technologies, such as autopilot systems, to work reliably in daily life.

“What the eye receives is just an image, a million pixels. To make sense of that is really difficult,” he said.

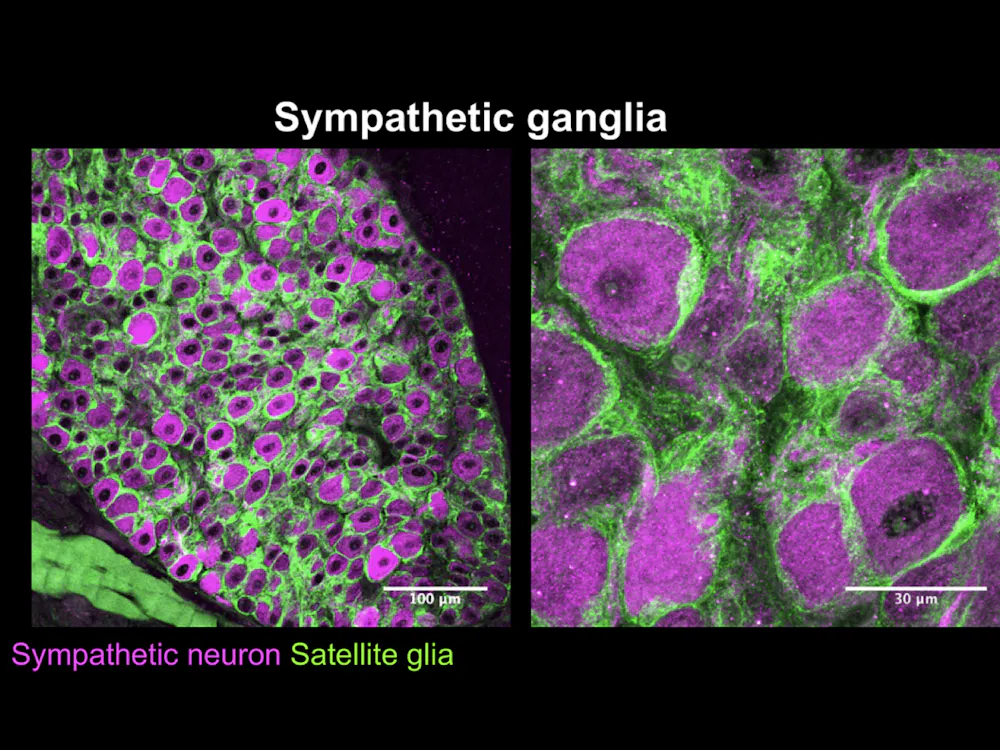

Von der Heydt’s research focuses on the neural mechanisms underlying visual perception in the secondary visual cortex. More specifically, he studies the orientations to which visual neurons respond.

It may come as a surprise to some: many neurons in the visual cortex respond poorly to uniform light, color or shape on its own, and instead respond to a line at a specific orientation. In other words, such a neuron becomes “excited” and responds only when a line or border is present in its receptive field. Furthermore, it responds most strongly when that line is positioned at one particular angle.

Detecting and responding to borders is extremely important because humans orient themselves in a world full of objects, and objects are defined by their edges and borders.

This was a Nobel Prize-winning finding, made by David Hubel and Torsten Wiesel, both of whom von der Heydt came to know personally while he worked in a neurology lab in Switzerland.

When asked why he became interested in the brain, von der Heydt said that it began when he was in high school and had deep questions about life.

“I was interested in computers and the brain... So I had some philosophical interests,” he said.

Von der Heydt began his training as an optical physicist in Germany and then earned his doctorate in Switzerland, working with the clinical neurologist Guenter Baumgartner, who was the first to record the firing of cells in the cat’s visual cortex.

With an extensive background in physics and neurology and training under a world-renowned scientist, von der Heydt was offered a position at Hopkins in 1993.

Building on this understanding of how visual neurons work, von der Heydt’s lab decided to take a closer look at the orientation preferences of neurons in the visual cortex.

After many years of work, his lab found that visual neurons not only respond best to a line at a particular angle but also prefer that the object lie on a particular side of that line. Consider a neuron that responds to a line crossing the middle of its receptive field. If an object is presented on one side of that line, the neuron responds strongly. If the same object is presented on the other side, the neuron responds weakly or not at all.

Von der Heydt explained the novelty of this discovery.

“It is weird because it shows that the system knows where the object is. It knows that the local feature belongs to something,” he said. “So we call this border-ownership coding.”

The finding surprised both von der Heydt and many of those who read about it.

“How does the system know what is an object?” von der Heydt asked. “This is one of the big questions in computer vision. You have an array of pixels, but where are the objects? Where is foreground and where is background?”

This is the essential question that today’s technology is trying to answer. How do we know that the truck is a truck and the sky is the sky?

Border-ownership coding partially answers that question. Because visual neurons signal which side of their preferred line the object is on, the brain can perceive that object as “near” and the surface on the other side as “far.” This is one of the first steps in visual perception and in answering that essential question.

One reason humans can distinguish the truck from the sky is that we know the truck is in front and the sky is in the background. We would never confuse the front with the back.

Von der Heydt’s findings partially explain how quickly the brain works out where objects are. This processing builds the world we see, which enables us to move around and act on the objects that interest us.

According to von der Heydt, object perception involves far more than object recognition.

“Object recognition is impressive. We have machines that can recognize a face, for example,” he said, “but recognition is... not everything.”

Perceiving the location, depth and borders of everything we see is much more complex, and involves many more neural mechanisms, than simply recognizing an object.

Von der Heydt has spent his entire career studying the visual system and how it perceives the world. Although he has retired, many researchers, some of them Hopkins students, are dedicated to continuing his work.